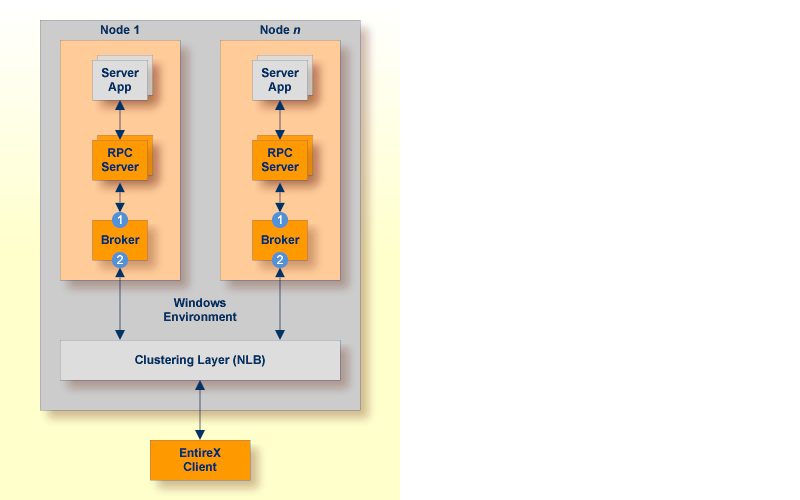

Scenario: "I want to use Windows NLB for my high availability cluster."

Segmenting dynamic workload from static server and management topology is critically important. Using broker TCP/IP-specific attributes, define two separate connection points:

One for RPC server-to-broker and admin connections.

The second for client workload connections.

See TCP/IP-specific Attributes. Sample attribute file settings:

| Sample Attribute File Settings | Note |
|---|---|
| PORT=1972 | In this example, the HOST is not defined, so the default setting will be used (localhost). |
| HOST=10.20.74.103 (or DNS) PORT=1811 | In this example, the HOST stack is the virtual IP address. The PORT will be shared by other brokers in the cluster. |
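As a quick sanity check that both connection points are listening, a small probe can attempt a TCP connection to each one. This is a minimal sketch, not part of EntireX: the function names and the `LISTENERS` mapping are illustrative, and the host and port values are simply the sample settings shown above. A successful connect only shows that the port accepts connections; it does not confirm that the broker behind it is healthy.

```python
import socket
from typing import Dict, Tuple


def listener_is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


# Sample values taken from the attribute settings above; adjust for your site.
LISTENERS: Dict[str, Tuple[str, int]] = {
    "management": ("localhost", 1972),    # RPC server-to-broker and admin
    "workload": ("10.20.74.103", 1811),   # client workload via the virtual IP
}


def check_listeners(listeners: Dict[str, Tuple[str, int]] = LISTENERS) -> Dict[str, bool]:
    """Probe every listener and report which ones accept connections."""
    return {
        name: listener_is_reachable(host, port)
        for name, (host, port) in listeners.items()
    }
```

Calling `check_listeners()` on a cluster host returns a dictionary such as `{"management": ..., "workload": ...}` with one boolean per connection point.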

We recommend the following:

Share configurations - you will want to consolidate as many configuration parameters as possible in the attribute setting. Keep separate yet similar attribute files.

Isolate workload listeners from management listeners.

All machines running the network load balancing service should have the correct local time. Ensure the Windows Time Service is properly configured on all hosts to keep clocks synchronized. Unsynchronized clocks can cause a network login prompt to appear that rejects valid login credentials.

You have to manually add each load balancing server individually to the load balancing cluster after you've created a cluster host.

To allow communication between servers in the same NLB cluster, each server requires the following registry entry for each network interface card's GUID: a DWORD value named "UnicastInterHostCommSupport", set to 1, under HKEY_LOCAL_MACHINE\System\CurrentControlSet\Services\WLBS\Parameters\Interface\{GUID}.

NLB may conflict with some network routers that are not able to resolve the IP address of the server. Such routers must be configured with a static ARP entry.

Monitor brokers through Command Central.
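The registry entry recommended above for inter-host communication has to be set once per network interface, which is easy to get wrong by hand on several hosts. The sketch below only builds the corresponding Windows `reg add` command line for a given interface GUID; it does not modify the registry itself. The helper name `reg_add_command` is hypothetical, and the sketch assumes the interface GUID is a `{GUID}` subkey of the `Interface` key.

```python
from typing import List

# Registry location named in the recommendation above; each network
# interface is assumed to have its own {GUID} subkey below it.
BASE_KEY = r"HKLM\System\CurrentControlSet\Services\WLBS\Parameters\Interface"


def reg_add_command(interface_guid: str) -> List[str]:
    """Build the `reg add` argument list for one network interface GUID."""
    # Accept the GUID with or without surrounding braces.
    guid = interface_guid.strip().strip("{}")
    key = BASE_KEY + "\\{" + guid + "}"
    return [
        "reg", "add", key,
        "/v", "UnicastInterHostCommSupport",  # value name
        "/t", "REG_DWORD",                    # value type
        "/d", "1",                            # value data
        "/f",                                 # overwrite without prompting
    ]
```

The resulting list can be reviewed, printed into a batch file, or passed to `subprocess.run` from an elevated prompt on each cluster host.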

In addition to broker redundancy, you also need to configure your RPC servers for redundant operations. We recommend the following best practices when setting up your RPC servers:

Make sure your definitions for CLASS/SERVER/SERVICE are identical across the clustered brokers. Using identical service names will allow the broker to round-robin messages to each of the connected RPC server instances.

For troubleshooting purposes, and if your site allows this, you can optionally use a different user ID for each RPC server.

RPC servers are typically monitored using Command Central as services of a broker.

Establish the broker connection using the static Broker name:port definition.

Maintain separate parameter files for each Natural RPC Server instance.
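The first recommendation above, identical CLASS/SERVER/SERVICE definitions on every clustered broker, can be checked mechanically once the definitions have been collected. The following is a minimal sketch that works on in-memory data only: how the triples are read from each broker's attribute file is left out, and the function name, broker names, and service names are illustrative.

```python
from typing import Dict, Set, Tuple

Service = Tuple[str, str, str]  # (CLASS, SERVER, SERVICE)


def find_missing_services(
    definitions: Dict[str, Set[Service]]
) -> Dict[str, Set[Service]]:
    """Return, per broker, the services defined elsewhere in the cluster but not on it.

    An empty result means every broker carries the same set of definitions.
    """
    # Union of all services defined on any broker in the cluster.
    all_services: Set[Service] = set().union(*definitions.values())
    missing = {}
    for broker, services in definitions.items():
        gap = all_services - services
        if gap:
            missing[broker] = gap
    return missing


# Illustrative data: broker2 defines one service that broker1 lacks.
example = {
    "broker1": {("RPC", "SRV1", "CALLNAT")},
    "broker2": {("RPC", "SRV1", "CALLNAT"), ("RPC", "SRV2", "CALLNAT")},
}
```

With the example data, `find_missing_services(example)` reports that `broker1` is missing `("RPC", "SRV2", "CALLNAT")`.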

An important aspect of high availability is keeping the system running during planned maintenance events such as lifecycle management, applying software fixes, or modifying the number of runtime instances in the cluster. Using a virtual IP networking approach for broker clustering keeps the overall system available while these tasks are carried out.

Broker administrators, notably on Linux and Windows systems, need to start, ping (to check that the broker is alive), and stop brokers and RPC servers from a system command line or from within batch or shell scripts. To control and manage the lifecycle of brokers, the following commands are available with Command Central:

sagcc exec lifecycle start local EntireXCore-EntireX-Broker-<broker-id>

sagcc get monitoring state local EntireXCore-EntireX-Broker-<broker-id>

sagcc exec lifecycle stop local EntireXCore-EntireX-Broker-<broker-id>

sagcc exec lifecycle restart local EntireXCore-EntireX-Broker-<broker-id>
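When these commands are driven from a script, it can help to build them in one place so that the broker ID is substituted consistently. The sketch below assembles exactly the four command lines listed above. The function names are hypothetical, and the `node` parameter is an assumption: it stands for the `local` argument used in the commands above. Running a command requires `sagcc` on the PATH of a host with Command Central installed.

```python
import subprocess
from typing import List

LIFECYCLE_ACTIONS = ("start", "stop", "restart")


def broker_component(broker_id: str) -> str:
    """Component name as used in the commands above."""
    return f"EntireXCore-EntireX-Broker-{broker_id}"


def lifecycle_command(action: str, broker_id: str, node: str = "local") -> List[str]:
    """Build `sagcc exec lifecycle <action> <node> <component>`."""
    if action not in LIFECYCLE_ACTIONS:
        raise ValueError(f"unsupported lifecycle action: {action!r}")
    return ["sagcc", "exec", "lifecycle", action, node, broker_component(broker_id)]


def state_command(broker_id: str, node: str = "local") -> List[str]:
    """Build `sagcc get monitoring state <node> <component>`."""
    return ["sagcc", "get", "monitoring", "state", node, broker_component(broker_id)]


def run(command: List[str]) -> subprocess.CompletedProcess:
    """Execute a command built above and capture its output as text."""
    return subprocess.run(command, capture_output=True, text=True, check=False)
```

For example, `run(lifecycle_command("restart", "ETB001"))` would issue the restart command for a broker whose ID is `ETB001` (a placeholder ID).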

To start an RPC server


See Starting the RPC Server for C | .NET | Java | XML/SOAP | IMS Connect | CICS IPIC | CICS ECI | AS/400 | IBM® MQ.

To ping an RPC server


Use the following Information Service command:

etbinfo -b <broker-id> -d SERVICE -c <class> -n <server name> -s <service> --pingrpc

To stop an RPC server


See Stopping the RPC Server for C | .NET | Java | XML/SOAP | IMS Connect | CICS IPIC | CICS ECI | AS/400 | IBM® MQ.

You can also use the command-line utility etbcmd. Example:

etbcmd -b <broker-id> -d SERVICE -o IMMED -m <class/server/service>

All hosts in the NLB cluster must reside on the same subnet, and the cluster's clients must be able to access this subnet.

When using NLB in multicast or unicast mode, routers need to accept proxy ARP responses (IP-to-network address mappings that are received with a different network source address in the Ethernet frame).

Make sure that Internet Control Message Protocol (ICMP) traffic to the cluster is not blocked by a router or firewall.

Cluster hosts and the virtual cluster IP need to have dedicated (static) IP addresses. This means you must request static IPs from your Network Services group.

NLB clustering is a stateless failover environment that does not provide application or in-flight message recovery.

Only TCP/IP should be configured on the network interface that NLB is configured for.
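The same-subnet requirement above can be verified before the cluster is built by checking the planned static addresses against the subnet. This is a minimal sketch using Python's standard `ipaddress` module; the function name is illustrative, and the `/24` prefix and host addresses in the usage note are assumptions chosen to match the sample virtual IP used earlier.

```python
import ipaddress
from typing import Iterable, List


def hosts_outside_subnet(subnet: str, addresses: Iterable[str]) -> List[str]:
    """Return the addresses that do not belong to the given subnet.

    `subnet` is written in CIDR notation, for example "10.20.74.0/24".
    An empty result means all addresses share the subnet.
    """
    network = ipaddress.ip_network(subnet, strict=True)
    return [
        address
        for address in addresses
        if ipaddress.ip_address(address) not in network
    ]
```

For instance, `hosts_outside_subnet("10.20.74.0/24", ["10.20.74.101", "10.20.74.103", "10.20.75.5"])` flags only `10.20.75.5`, which would have to be moved into the cluster's subnet before it could join.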