An Adabas session involves the execution of the Adabas nucleus which controls access/update to a single database. This chapter describes the job control statements needed when executing an Adabas session under each supported operating system. For examples of the Adabas utility jobs, see the Adabas Utilities documentation.

Adabas uses operating system services to synchronize the start and end of nucleus and utility executions. Only one program can modify the data integrity block (DIB) at a time.


The services consist of systems-wide ENQ/DEQ macros (SCOPE=SYSTEMS) with the major name (QNAME) 'ADABAS'.

This feature reliably and efficiently guarantees proper synchronization of DIB updates within a single operating-system image.

If your database resides on disks that are shared among multiple images of the operating system and you run nucleus or utility jobs against the same database on more than one of the system images, you need to ensure that either:

- the system images are installed in such a way that synchronization is effective on all systems where nucleus and utility jobs execute; or

- nucleus and utility jobs do not execute concurrently on different system images.

Consult your system programmer for the needed information.

| Warning: If different nucleus or utility jobs updating the same file are allowed to start or terminate on different system images at the same time without proper synchronization, a DIB update may be lost. If this happens, a lock in the DIB may be violated, thereby opening the file to the possibility of destruction due to concurrent unsynchronized updates by utilities. |

The following data sets are required when executing an Adabas session under z/OS.

| Data Set | DD Name | Storage Medium | Additional Information |
|---|---|---|---|
| ADARUN parameters | DDCARD | card image | note 1 |
| ADARUN / Adabas messages | DDPRINT | printer | note 2 |
| Associator | DDASSORn or DDASSOnn | disk | note 3 |
| ADATCP parameters | TCPIN | card image | This optional data set is used only with ADATCP, to provide input parameters. See JCL Required for UES and TCP/IP Support (z/OS) for further details. |
| Data Storage | DDDATARn or DDDATAnn | disk | note 3 |
| Work | DDWORKR1, DDWORKR4 | disk | note 4 |
| Recovery Aid log | DDRLOGR1 | disk | note 5 |
| Protection log | DDSIBA | tape/disk | note 6 |
| Protection log, multiple log 1 | DDPLOGR1 | disk | note 7 |
| Protection log, multiple log 2 | DDPLOGR2 | disk | note 7 |
| Command log | DDLOG | tape/disk | note 8 |
| Command log, multiple log 1 | DDCLOGR1 | disk | note 9 |
| Command log, multiple log 2 | DDCLOGR2 | disk | note 9 |
| Abnormal termination | MPMDUMP | printer | note 10 |
| Abnormal termination (if large buffer pools are used) | SVCDUMP | printer | note 11 |
| SMGT dump and snap dump | ADASNAP | printer | note 12 |
| SMGT print file | DDTRACE1 | printer | note 13 |
| Time zone files | TZINFO | disk | note 15 |

If you did not convert the license file to a license load module and add it to the Adabas load library (the preferred approach), you need to add a DD statement for Adabas and for each add-on product that points to the corresponding sequential license file.

For a list of license file names, load modules and DD names, refer to Adabas and Add-on Licenses.

This job includes multiple protection logging, multiple command logging, and Recovery Aid logging:

    //NUC099   EXEC PGM=ADARUN
    //STEPLIB  DD DISP=SHR,DSN=ADABAS.ADAvrs.LOAD
    //DDASSOR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.ASSOR1
    //DDDATAR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.DATAR1
    //DDWORKR1 DD DISP=OLD,DSN=EXAMPLE.ADAyyyyy.WORKR1
    //DDPLOGR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.PLOGR1
    //DDPLOGR2 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.PLOGR2
    //DDCLOGR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.CLOGR1
    //DDCLOGR2 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.CLOGR2
    //DDRLOGR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.RLOGR1
    //TZINFO   DD DISP=SHR,DSN=ADABAS.Vvrs.TZ00
    //DDPRINT  DD SYSOUT=X
    //DDTRACE1 DD SYSOUT=X
    //SYSUDUMP DD SYSOUT=X
    //MPMDUMP  DD SYSOUT=X
    //ADASNAP  DD SYSOUT=X
    //DDCARD   DD *
    ADARUN PROG=ADANUC,DB=yyyyy
    ADARUN LBP=600000
    ADARUN LWP=320000
    ADARUN LS=80000
    ADARUN LP=400
    ADARUN NAB=24
    ADARUN NC=1000
    ADARUN NH=2000
    ADARUN NU=100
    ADARUN TNAE=180,TNAA=180,TNAX=600,TT=90
    ADARUN NPLOG=2,PLOGSIZE=1800,PLOGDEV=dddd
    ADARUN NCLOG=2,CLOGSIZE=1800,CLOGDEV=dddd
    //

where:

| Variable | Description |
|---|---|
| dddd | is a valid device type. |
| vrs | is the version of the product. |
| yyyyy | is the physical database ID. |

If you have UES-enabled your database, you must also include the ICS load library in the STEPLIB:

    //STEPLIB DD ....
    //        DD DISP=SHR,DSN=SAG.ICSvrs.LOAD

Note

The library contains conversion objects that are loaded on demand for text conversions.

If you are connecting your UES-enabled database directly through a TCP/IP link, you must also:

Include the ADATCP library in the STEPLIB:

    //STEPLIB DD ....
    //        DD DISP=SHR,DSN=WCPvrs.LOAD
    //        DD DISP=SHR,DSN=WTCvrs.LOAD

Note

These libraries are distributed with Entire Net-Work.

If necessary, include the TCPIN DD statement, for example:

    //TCPIN DD *
    ADI=Y
    ADIHOST=AHOST
    ADIPORT=4952
    /*

The dump produced by MPMDUMP may be too slow for users with very large buffer pools. You may instead elect to use the z/OS SVC dump facility to speed up nucleus dump processing. An SVC dump is triggered by the presence of an //SVCDUMP DD statement in the nucleus startup JCL.

If //SVCDUMP DD DUMMY is specified, and the job is running with APF authorization, a z/OS SVC dump is produced on the system dump data set, normally SYS1.DUMPxx. If //SVCDUMP DD DUMMY is specified and the job is not running with APF authorization, message ADAM77 is issued and dump processing continues as if the SVCDUMP DD statement had not been specified.

If //SVCDUMP DD DSN=dsn is specified (with an appropriate data set name), a z/OS SVC dump is produced on the specified data set. Note that the SVCDUMP data set needs to be allocated with DCB attributes RECFM=FB,LRECL=4160,BLKSIZE=4160. Note also that, for APF-authorized jobs, secondary extents are ignored.
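As an illustration of the DCB attributes above, a dump data set could be preallocated in a separate job step. This is only a sketch: the step name ALLOCDMP, the data set name, UNIT=SYSDA, and the space figure are assumptions, not values taken from this documentation. Only a primary allocation is requested because secondary extents are ignored for APF-authorized jobs.

```jcl
//* Preallocate the SVC dump data set (names and sizes are placeholders)
//ALLOCDMP EXEC PGM=IEFBR14
//SVCDUMP  DD DSN=EXAMPLE.ADAyyyyy.SVCDUMP,
//            DISP=(NEW,CATLG,DELETE),
//            UNIT=SYSDA,SPACE=(CYL,(1000)),
//            DCB=(RECFM=FB,LRECL=4160,BLKSIZE=4160)
```

The nucleus startup JCL would then reference the preallocated data set. DISP=OLD is an assumption here, chosen so that only one job writes to the data set at a time.

```jcl
//SVCDUMP  DD DISP=OLD,DSN=EXAMPLE.ADAyyyyy.SVCDUMP
```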

For APF-authorized jobs, the SVC dump title consists of "ADABAS System Dump" plus additional job name, DBID, and timestamp information in the form: Job jjjjjjjj DBID nnnnn yyyy/mm/dd hh:mm:ss.hhttmm.

For non-authorized jobs, the title consists of "ADABAS Sys Tx Dump" plus the additional job name, DBID, and timestamp information. Note that the timestamp reflects the time at which the SVC dump request was passed to z/OS, not the time at which any preceding abend occurred.

The shortest dump processing time occurs when you specify //SVCDUMP DD DUMMY. This is because the nucleus only needs to wait for the dump to be captured, not written out to a dump data set. A specification of //SVCDUMP DD DSN=dsn will give you a shorter processing time for an APF-authorized job than for a non-APF-authorized job. In both cases, the time taken for dump processing may actually be longer than with MPMDUMP.

When an SVCDUMP DD statement is included in your JCL, any MPMDUMP DD statement is ignored, unless Adabas detects that it is unable to proceed with SVC dump processing.

If an error is encountered while writing the SVC dump, message ADAM78 appears. If dump writing completes successfully, message ADAM79 appears. For more information on these messages, refer to your Adabas messages and codes documentation.

No error message is produced when a dump can only be partially written. You should therefore ensure that sufficient space is available on the dump data set to accommodate the dump.

When an SVCDUMP DD statement is included in the JCL, but the SVC dump is unable to complete successfully, dump processing reverts to the standard dump options as specified in the JCL via the MPMDUMP, SYSUDUMP, SYSABEND or SYSMDUMP DD statements.

Note

SVC dump processing might be suppressed due to installation SLIP or DAE options. If dump processing is still required in this case, the relevant MPMDUMP, SYSUDUMP, SYSABEND or SYSMDUMP DD statement should be specified in the JCL in addition to the SVCDUMP DD statement.

When the SVCDUMP DD statement is omitted from the JCL, existing dump options, specified via the MPMDUMP, SYSUDUMP, SYSABEND or SYSMDUMP DD statements, continue to operate as normal.
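Putting these rules together, a nucleus job that prefers an SVC dump but keeps a conventional dump as a fallback might carry DD statements like the following. This is a sketch only; the SYSOUT class X is borrowed from the sample job and may differ at your site.

```jcl
//* SVC dump to the system dump data set (APF-authorized jobs)
//SVCDUMP  DD DUMMY
//* Fallback if the SVC dump cannot be taken or is suppressed
//MPMDUMP  DD SYSOUT=X
//SYSUDUMP DD SYSOUT=X
```

With this combination, the MPMDUMP statement is ignored whenever the SVC dump can proceed, and is used only when it cannot.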

The following notes apply to Adabas startup jobs on various platforms (as described by the notes).

Note 1: This data set is used to provide the Adabas session parameters.

Note 2: This data set is used to print messages produced by the control module ADARUN or the Adabas nucleus.

Note 3: The Adabas Associator and Data Storage data sets. These data sets are mandatory.

n (one digit) and nn (two digits) represent the number of the Associator or Data Storage data set.

If more than one data set exists for Associator or Data Storage, a separate statement is required for each. For example, if the Associator consists of two data sets, DD statements for DDASSOR1 and DDASSOR2 are required.

If fewer than 10 data sets exist for each, you must use the DDASSOR* and DDDATAR* DD names in the JCL. For example, if the Associator consists of two data sets and Data Storage consists of three data sets, the following names would be used in the JCL: DDASSOR1, DDASSOR2, DDDATAR1, DDDATAR2, and DDDATAR3. If 10 or more data sets exist, the first nine must use the DDASSOR* and DDDATAR* DD names in the JCL and the remainder must use the DDASSO* and DDDATA* DD names (dropping the "R" in the DD names). For example, the tenth Associator data set is identified in the JCL using the name DDASSO10, while the third Associator data set in the same JCL would be identified using the name DDASSOR3.

A maximum of 99 physical extents is now set for Associator and Data Storage data sets. However, your actual maximum could be lower because the extent descriptions of all Associator, Data Storage, and Data Storage Space Table (DSST) extents must fit into the general control blocks (GCBs). For example, on a standard 3390 device type, there could be more than 75 Associator, Data Storage, and DSST extents each (or there could be more of one extent type if there are fewer of another).

The range of Associator DD names that can be used in JCL is therefore DDASSOR1 to DDASSOR9 and DDASSO10 to DDASSO99. Likewise, the range of Data Storage DD names is DDDATAR1 to DDDATAR9 and DDDATA10 to DDDATA99.
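The DD naming rule for ten or more data sets can be illustrated with an Associator that consists of eleven data sets. The data set names below simply continue the pattern of the sample job and are placeholders; only the DD names matter here.

```jcl
//* Associator split across eleven data sets (illustrative names)
//DDASSOR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.ASSOR1
//DDASSOR2 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.ASSOR2
//* DDASSOR3 through DDASSOR8 follow the same pattern
//DDASSOR9 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.ASSOR9
//* From the tenth data set on, the "R" is dropped from the DD name
//DDASSO10 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.ASSO10
//DDASSO11 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.ASSO11
```

Data Storage data sets follow the same scheme with DDDATARn and DDDATAnn.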

Note 4: The Adabas Work data sets. The WORKR1 data set is mandatory. If you have Adabas Transaction Manager installed, an additional work data set, WORKR4, is also mandatory.

We recommend running the nucleus with DISP=OLD for the WORKR1 data set to prevent two nuclei from writing to the same Work data set and corrupting the database. This could otherwise happen if the ADARUN parameters FORCE and IGNDIB are used improperly.

Work part 4 of WORKR1 is no longer supported if you have Adabas Transaction Manager installed. Instead, you should use the WORKR4 data set. WORKR4 is used for the same purpose as Work part 4, but it can be used in parallel by all members in a cluster. It is used to store the PET (preliminary end-of-transaction) overflow transactions (those that cause a work overflow) of a database or of all members in a multiplex/SMP cluster.

The WORKR4 data set is a container data set that should be allocated and formatted in the normal way (use ADAFRM WORKFRM), using a block size greater than or equal to that of WORKR1. WORKR4 can have the same device type as WORKR1 or a different one. It should be at least as large as the LP parameter of the database or cluster. The smaller WORKR1 Work part 1 is, the larger WORKR4 should be. This is because the nucleus must prevent a work overflow due to incomplete DTP transactions, but the nucleus must keep all PET transactions; they cannot be backed out.

Note 5: If the Adabas Recovery Aid is being used, this logging data set is required.

Note 6: The data protection log data set. This data set is required if the database will be updated during the session and logging of protection information is desired. This data set is not applicable if multiple protection logging is used.

The data protection log may be assigned to tape or disk. A new data set must be used for each Adabas session (DISP=MOD may not be used). See Adabas Restart and Recovery for additional information.

Note 7: Multiple (two to eight) data protection log data sets. These data sets are required only if multiple data protection logging is to be in effect for the session.

Multiple data protection logging is activated by the ADARUN NPLOG and PLOGSIZE parameters. The device type of the multiple protection logs is specified with the ADARUN PLOGDEV parameter.

Whenever one of multiple protection log data sets is full, Adabas switches automatically to another data set and notifies the operator through a console message that the log which is full should be copied using the PLCOPY function of the ADARES utility. This copy procedure may also be implemented using the user exit 12 facility as described in the User Exits documentation.
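For illustration, a session with four protection log data sets might extend the sample job as follows. The DD names DDPLOGR3 and DDPLOGR4 and all data set names are assumed to continue the DDPLOGR1 and DDPLOGR2 pattern of the sample job; the matching ADARUN setting would be NPLOG=4, with PLOGSIZE and PLOGDEV chosen for your devices.

```jcl
//* Four protection log data sets (pair with ADARUN NPLOG=4)
//DDPLOGR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.PLOGR1
//DDPLOGR2 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.PLOGR2
//DDPLOGR3 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.PLOGR3
//DDPLOGR4 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.PLOGR4
```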

Note 8: The command log data set. This data set is required if command logging is to be performed during the session; if no command logging is to be performed, it may be omitted. Command logging is activated by the ADARUN LOGGING parameter.

Note 9: Multiple (two to eight) command log data sets. These data sets are required only if multiple command logging is to be in effect for the session.

Multiple command logging is activated by the ADARUN NCLOG and CLOGSIZE parameters. The device type of the multiple command log data sets is specified with the ADARUN CLOGDEV parameter.

Whenever one of multiple command log data sets is full, Adabas switches automatically to another data set and notifies the operator through a console message that the log which is full should be copied using the CLCOPY function of the ADARES utility. This copy procedure may also be implemented using the user exit 12 facility as described in the Adabas User Exits documentation.

Note 10: This data set is used to take an Adabas dump including SVC, ID-TABLE and allocated CSA in the event that an abnormal termination occurs.

The line count in the JCL must be set appropriately; otherwise, the dump cannot be printed in its entirety.

Note 11: The z/OS SVC dump facility can be used to speed up nucleus dump processing if an MPMDUMP is too slow for users with very large buffer pools. We recommend that you specify both an MPMDUMP and an SVCDUMP DD statement in your JCL to ensure that one of the dumps is produced when needed. If an SVCDUMP DD statement is included, an SVC dump is created if possible and the MPMDUMP DD statement is ignored. Should problems arise during processing of the SVC dump, an MPMDUMP will be taken, but only if the MPMDUMP DD statement is also specified in the JCL. For more information, read Using the z/OS SVC Dump Facility.

Note 12: This data set is used under z/OS to take an Adabas dump (SMGT,DUMP) or snap dump (SMGT,SNAP) when using the error handling and message buffering facility.

Note 13: This data set is used when the PIN output is to be directed to DDTRACE1 rather than DDPRINT as specified by the user in ADASMXIT when using the error handling facility. If DDTRACE1 has not been specified in the JCL and PRINTDD is set to "NO" in ADASMXIT, output will be lost. The PIN output will not be written to DDPRINT unless "YES" is specified.

Note 15: The TZINFO data set is required when defining fields with the TZ option and using the user session open parameter TZ. This data set is a library or partitioned data set containing information on local time offsets, daylight saving time offsets, and their transition times.

Although the normal mode of operation is multiuser mode, it is also possible to execute Adabas together with a user program or Adabas utility in the same region.

For single-user mode, you must include the Adabas nucleus job control that you use along with the job control for the utility or user program.

The Adabas prefetch option cannot be used in single-user mode; however, single-user mode must be used when running a read-only nucleus and an update nucleus simultaneously.
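A single-user job step might therefore combine the nucleus data sets with the DD statements of the program being run, roughly as sketched below. The step name and data set names are placeholders, pppppppp stands for the utility or user program, and the ADARUN MODE=SINGLE setting mentioned in the comments is not described in this section; check the ADARUN parameter documentation before relying on it.

```jcl
//* Single-user mode sketch: nucleus data sets in the program's job step
//SINGLE   EXEC PGM=ADARUN
//STEPLIB  DD DISP=SHR,DSN=ADABAS.ADAvrs.LOAD
//DDASSOR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.ASSOR1
//DDDATAR1 DD DISP=SHR,DSN=EXAMPLE.ADAyyyyy.DATAR1
//DDWORKR1 DD DISP=OLD,DSN=EXAMPLE.ADAyyyyy.WORKR1
//DDPRINT  DD SYSOUT=X
//* DD statements required by the utility or user program go here
//* The ADARUN parameter input then names the program and the mode:
//*   ADARUN PROG=pppppppp,MODE=SINGLE,DB=yyyyy
```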

Some information within an Adabas database is user-related and must be retained from session to session. One such kind of information is ET data records; another is the priority value assigned to a user.

A set of user-related information can be stored in a profile table. The values stored in this table are read at OPEN time and assigned to the user. The direct call user must OPEN the Adabas session with the proper call; that is, as an ID user with an ETID in the Additions 1 field of the Adabas control block. For Natural users, the profile table is identified by the Natural ETID.

The associated fields are user-related timeout and threshold values, and the OWNERID for multiclient fields. One record per user is stored. The profile table is maintained using Adabas Online System.

The user-related values shown below are currently stored in the profile table.

| Value | Description |
|---|---|
| PRIORITY | User's priority (0-255) |
| TNAA* | Access user non-activity time |
| TNAE* | ET user non-activity time |
| TNAX* | EXU/EXF user non-activity time |
| TT* | Transaction time threshold |
| TLSCMD* | Sx command threshold |
| NSISN* | Maximum number of ISNs per TBI element |
| NSISNHQ* | Maximum number of records held by user |
| NQCID* | Maximum number of active command IDs per user |
| OWNERID | Owner ID for multiclient file access |

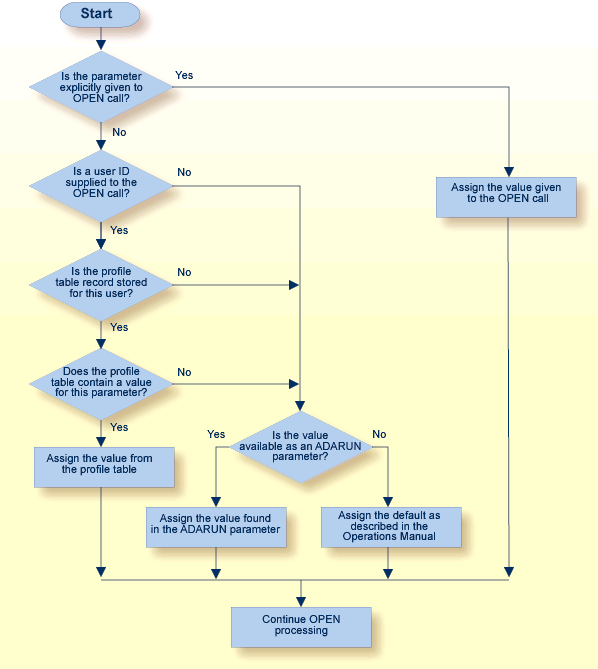

* The decision sequence for determining the values for a user at the time of an open call is shown in Managing the User Profile.

Adabas Online System (AOS) must be used to maintain the profile table. See the Adabas Online System documentation for detailed information about managing the profile table.